I was planning to migrate one of the projects I work on as CTO away from Microsoft Azure because of the #AzureDown issue, and I published a tweet about it.

Someone from Google invited me to check out Google Cloud, and I asked for a voucher to test the newly added features of the platform and publish the results at cmips.

I had tested Google when I was in charge of the Easy Cloud Managed project, but at that time Google had App Engine and not yet Compute Engine.

He put me in contact with three wonderful engineers, who talked to me about some de facto standard software for performance benchmarking and showed me some statistics from other projects. I explained that I wanted to test the real use that applications like Apache or MySQL make of the machine, and that I want to detect clouds that oversell their memory (by 10% or more), so that the host actually swaps and the guest instances are slow. I explained how I test, in the way that is most realistic for the needs of start-ups, and why I wrote cmips (source code) from scratch, multi-threaded and using only CPU and RAM speed computation operations, with no system calls that could distort the results (hypervisors do many tricks to improve performance).

Like Amazon, Google offers a lot of tools and services. I especially like the possibility of providing just your code and letting Google scale transparently to the number of servers you need, on the same architecture they use with Google App. But because good engineers are software artisans who require full control and use very custom solutions for the requirements of start-ups, I will focus on Google Compute Engine, the equivalent of virtual machines/instances, which is what we evaluate at cmips.

The first thing I noticed is that there is a free trial voucher worth 300 USD or 2 months. I also noticed that Google Compute Engine charges in 10-minute intervals.

2016-06-30 Correction: Paul Nash from Google was kind enough to point out that I had misunderstood the pricing policy. As he said: “[…]we charge per minute, but there is a 10 minute initial minimum when the VM starts. After that it is per minute[…]”. Thanks for the clarification.
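To make the corrected billing rule concrete, here is a minimal Python sketch of per-minute billing with a 10-minute initial minimum. Rounding partial minutes up to the next whole minute is my assumption; the quote does not say how partial minutes are handled.

```python
import math

MINIMUM_MINUTES = 10  # initial minimum quoted in the correction


def billed_minutes(runtime_seconds: float) -> int:
    """Minutes billed for one VM run: per minute, with a 10-minute minimum.

    Assumption: a partial minute is rounded up to a whole minute.
    """
    if runtime_seconds <= 0:
        return 0
    return max(MINIMUM_MINUTES, math.ceil(runtime_seconds / 60))


if __name__ == "__main__":
    for seconds in (90, 600, 601, 3600):
        print(f"{seconds} s -> {billed_minutes(seconds)} min billed")
```

Under these assumptions a 90-second test run is billed as 10 minutes, while anything longer than 10 minutes is billed by the minute.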

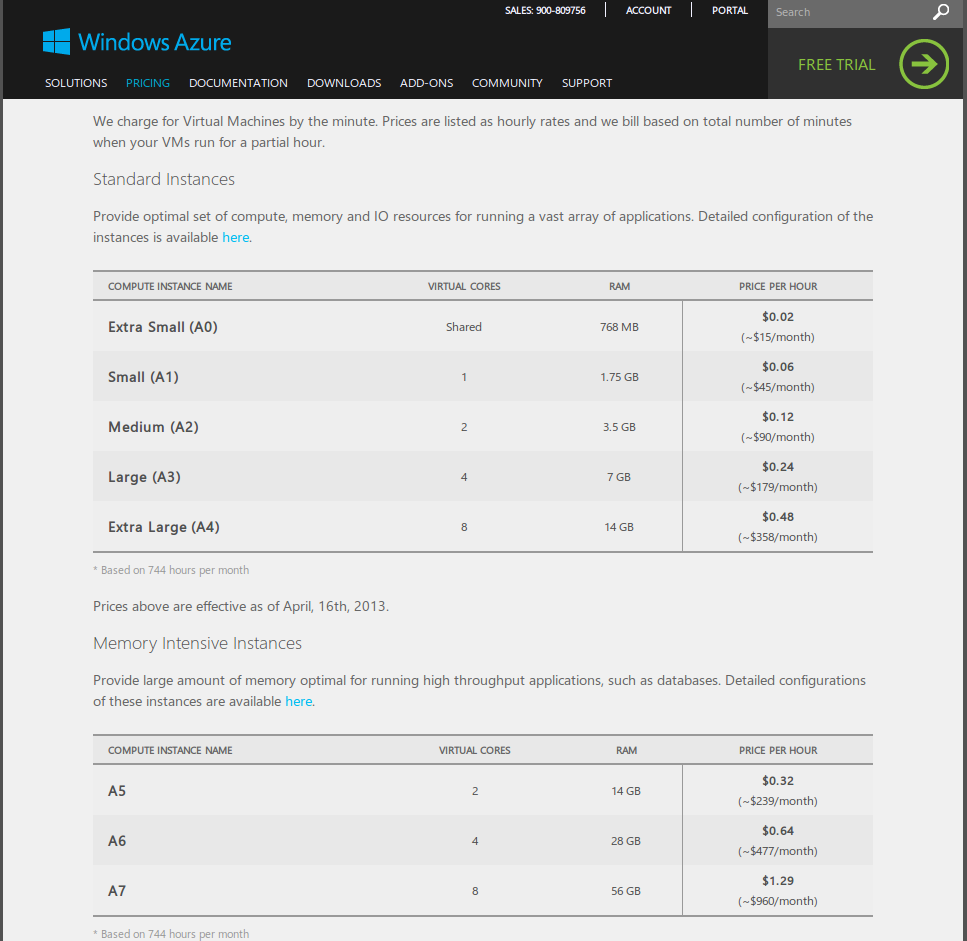

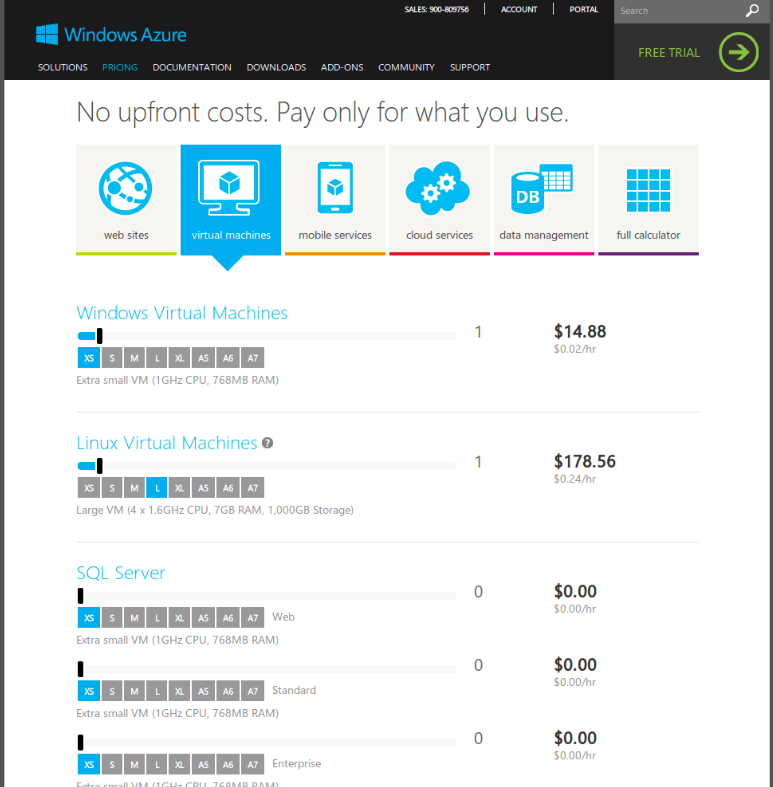

Some providers charge by the hour and a few, like Microsoft Azure, by the minute. A few charge by the day.

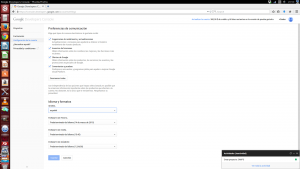

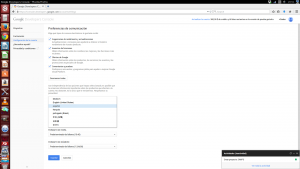

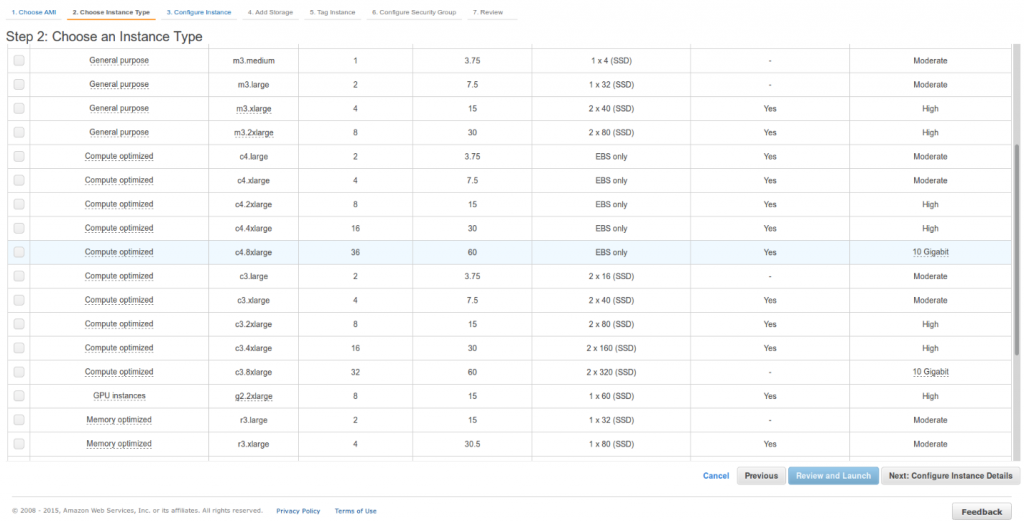

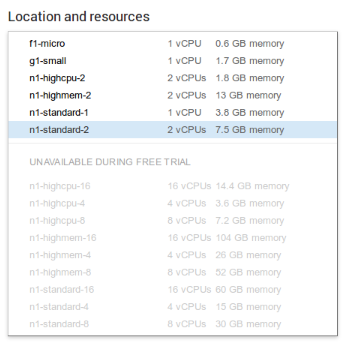

As with Amazon, the free voucher is very limited. You can only launch the smaller models, from f1-micro (1 core on a shared CPU) to n1-standard-2 (2 vCPU and 7.5 GB RAM).

As you can see, the list is sorted not by power but by name, so n1-highcpu-16 comes before n1-highcpu-4 because the 1 in 16 sorts before 4.
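The ordering problem is the classic difference between plain string sorting and "natural" sorting. A small Python sketch, using a hypothetical helper `natural_key` of my own, shows both orders for a few of the machine type names:

```python
import re


def natural_key(name: str):
    """Split a name into text and integer chunks so that 4 sorts before 16."""
    return [int(part) if part.isdigit() else part
            for part in re.split(r"(\d+)", name)]


machine_types = ["n1-highcpu-2", "n1-highcpu-16", "n1-highcpu-4", "n1-highcpu-8"]

by_name = sorted(machine_types)                   # what the console list does
by_size = sorted(machine_types, key=natural_key)  # what a reader would expect

if __name__ == "__main__":
    print("by name:", by_name)
    print("by size:", by_size)
```

Sorting by name puts n1-highcpu-16 first; the natural key orders the same names as 2, 4, 8, 16.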

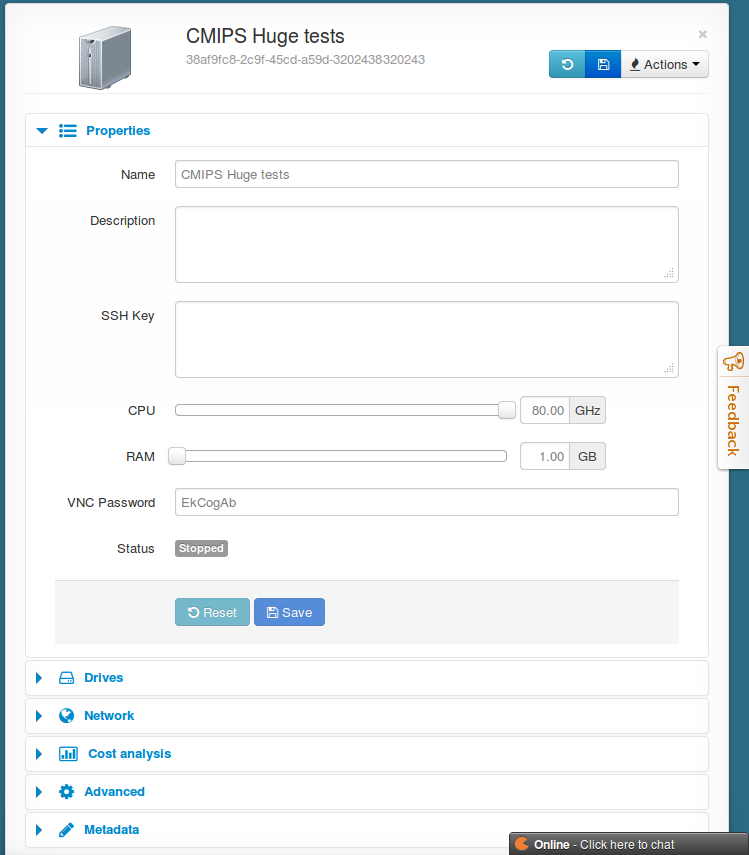

As with Amazon, the models are pre-set: x vCPU, y GB RAM, etc. Only CloudSigma (which has its own hypervisor), LunaCloud (with less powerful servers) and a few others let you freely customize the balance between computing power and RAM, so you don’t pay for what you don’t need.

All the instances are 64 bit, Intel based. The only one we have found to have additional support for Sun (Oracle) Solaris SPARC is CloudSigma.

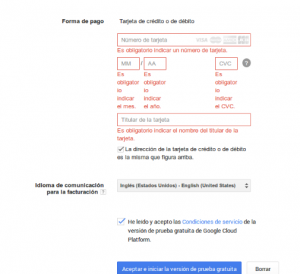

When I registered, the whole process was presented in Spanish. My Google account is set to Catalan, but as Catalan is not available, everything was automatically shown in Spanish. I would have preferred to go through the whole process in English, but there was no way to change the language during registration. That’s why some screen captures are in Spanish: only once I was registered was I able to switch to English.

As with many providers, a Visa card is required, although it is not charged. I guess that’s a validation measure, like the one Amazon uses.

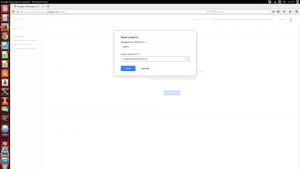

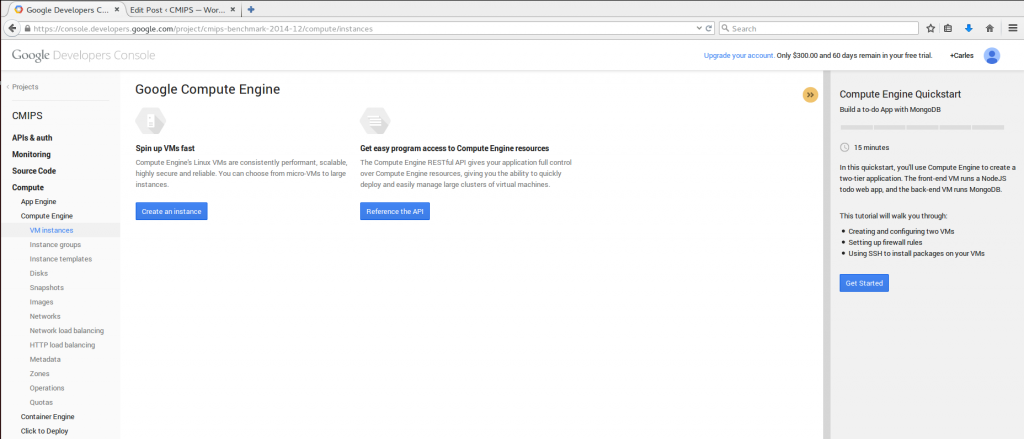

Once you have registered, to start, you must create a Project.

The interface clearly reminds me of a mix between the first versions of Amazon AWS and Microsoft Azure.

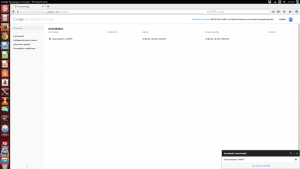

The status widget at the bottom comes with a very useful option: the ability to retry an operation that failed.

An interesting option for developers is that, from the very first moment, there is a step-by-step wizard to learn how to create an application with several servers and a MongoDB database.

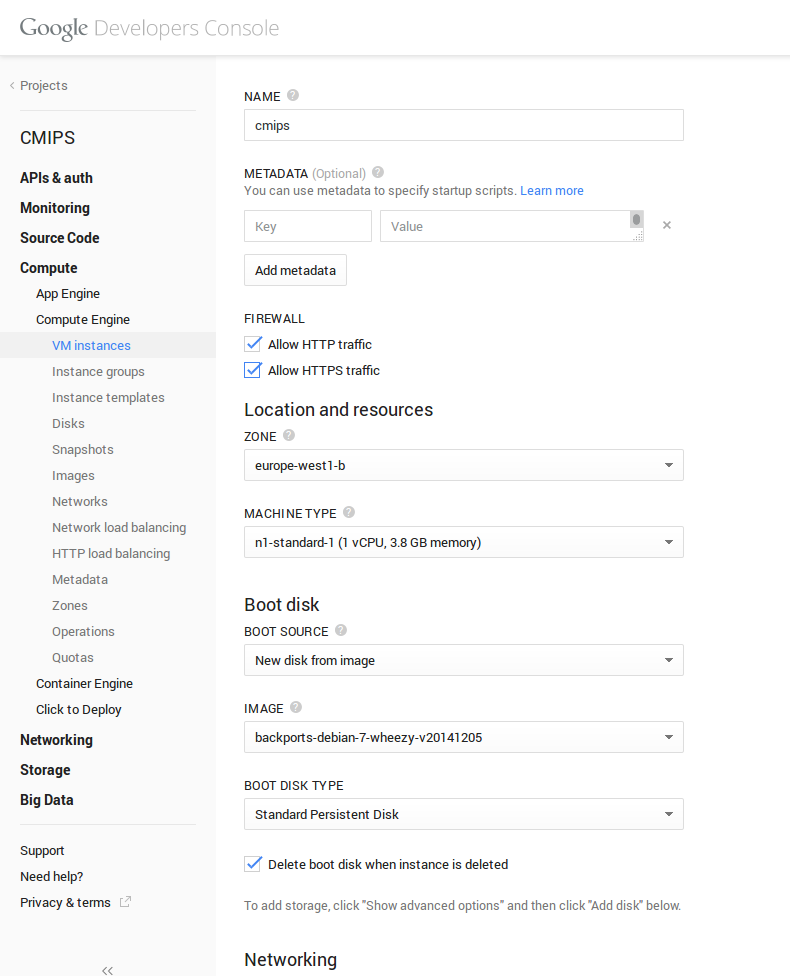

When launching an instance, a very good point is that they allow metadata (like cloud-init), so that your scripts are launched when the machine is created.

It is powerful as a concept but has a lot of room for improvement, as it is really user-unfriendly (take a look at ECManaged, the not so cool but OK RightScale, or Amazon User Data to see why).

Ease of use, together with reasonable common sense in making advanced features like cloud-init/user data/metadata easy to use, portable and reusable, is what most cloud providers are missing. Most developers have no clue about systems, base64, etc., so cloud systems have to make things easier, and especially make auto-scaling easy and error-proof. This is one of the points I stressed to Amazon when I was at their offices in Dublin.
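To illustrate the kind of plumbing developers are expected to know, here is a minimal Python sketch that prepares a boot script in two shapes: as a metadata item, following the `metadata.items` structure that appears in the instance-creation REST body further down, and base64-encoded, as some provider APIs expect for user data. The `startup-script` key comes from the Compute Engine documentation rather than from this article, and the script content and helper names are purely illustrative.

```python
import base64
import json

# Illustrative boot script; its content is an assumption, not from the article.
STARTUP_SCRIPT = "#!/bin/bash\napt-get update\napt-get install -y apache2\n"


def as_metadata_items(script: str) -> dict:
    """Wrap a script as a metadata block with a single key/value item."""
    return {"items": [{"key": "startup-script", "value": script}]}


def as_base64_user_data(script: str) -> str:
    """Encode a script as base64 text, the form some APIs require for user data."""
    return base64.b64encode(script.encode("utf-8")).decode("ascii")


if __name__ == "__main__":
    print(json.dumps(as_metadata_items(STARTUP_SCRIPT), indent=2))
    encoded = as_base64_user_data(STARTUP_SCRIPT)
    print(encoded)
    # Decoding gives back the original script unchanged.
    print(base64.b64decode(encoded).decode("utf-8") == STARTUP_SCRIPT)
```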

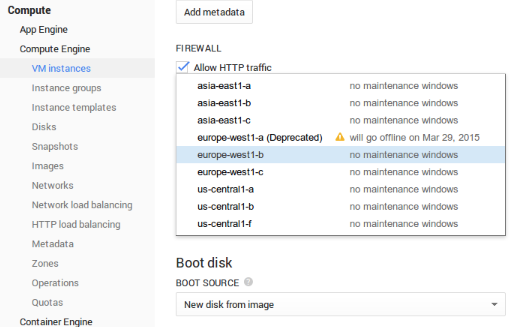

It is nice that the zone selection drop-down indicates the upcoming maintenance windows. It’s always good to know about planned maintenance if you intend to release something.

The base images cover the most popular distributions and are updated very often. The creation date of each image is clearly shown.

So when I installed my Ubuntu 14.10 Server image and ran apt-get upgrade, there was nothing to upgrade. Everything was up to date. The Ubuntu base image was created on 2014-12-17.

The user interface is a bit spartan. It looks to me as if the developers first created the REST API and then quickly built a user-friendly interface just to sell the service, but by nature it is a REST/gcloud command-line application. (Yes, they have a set of command-line tools, like Amazon has.)

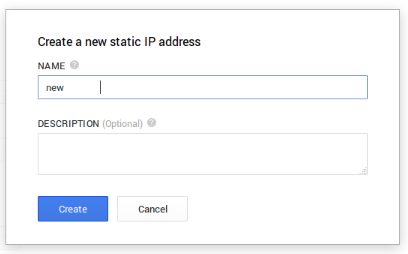

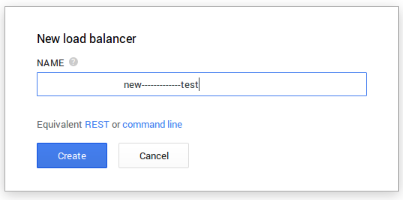

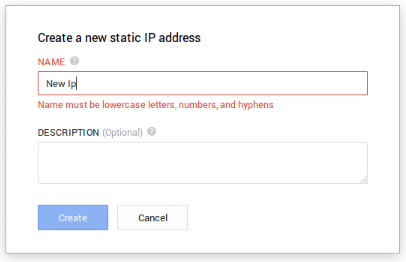

I say that because I was able to trick the interface’s validations a bit: although spaces are not accepted, they “eat” them all if I put the spaces at the beginning, like “ Test”.

Also ” Eat this——————–” is accepted.

The spaces at the beginning are trimmed later, but I think a good user interface should take care of aspects like this. Tricky spaces at the end don’t raise an error either.

It is annoying that at the beginning you don’t know that you can’t use spaces in the names of the different objects, and it is a bit confusing that the name is used as the ID.

So if I send a request via the RESTful API without knowing that an object with that name/ID already exists, the operation will fail. Amazon uses names as something nice for the user, but internally always works with IDs (based on UUID).

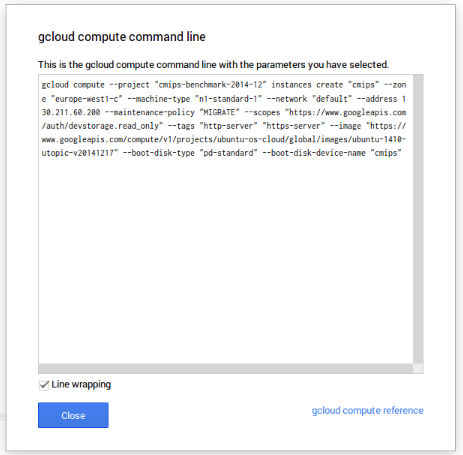

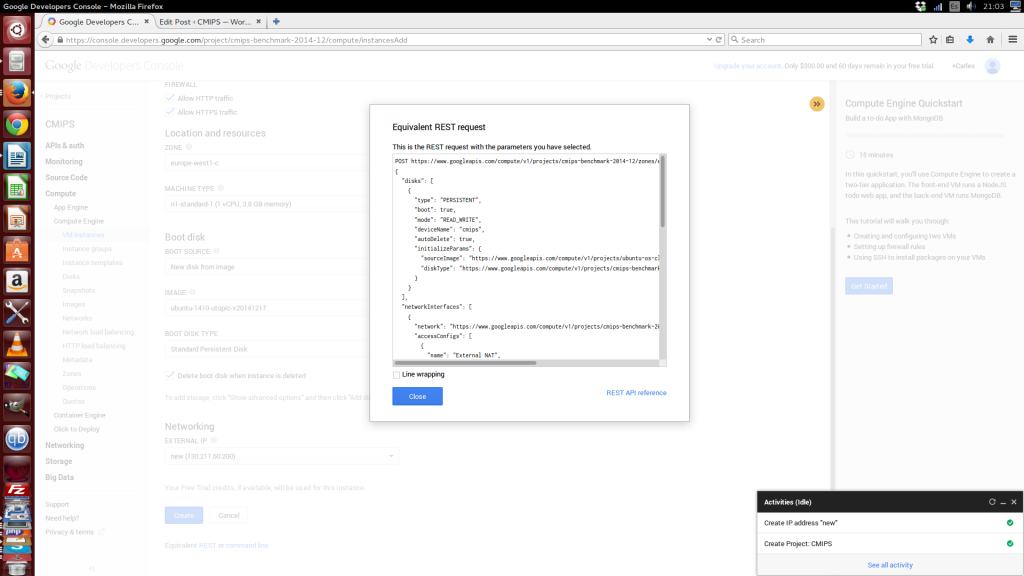

A very good point is that every page of the console offers the equivalent REST command.

One improvement would be the ability to copy the REST message formatted, as it is copied without spaces (just for easier reading).

So you can easily adapt it for your automated processes.

```
POST https://www.googleapis.com/compute/v1/projects/cmips-benchmark-2014-12/zones/europe-west1-c/instances
{
  "disks": [
    {
      "type": "PERSISTENT",
      "boot": true,
      "mode": "READ_WRITE",
      "deviceName": "cmips",
      "autoDelete": true,
      "initializeParams": {
        "sourceImage": "https://www.googleapis.com/compute/v1/projects/ubuntu-os-cloud/global/images/ubuntu-1410-utopic-v20141217",
        "diskType": "https://www.googleapis.com/compute/v1/projects/cmips-benchmark-2014-12/zones/europe-west1-c/diskTypes/pd-standard"
      }
    }
  ],
  "networkInterfaces": [
    {
      "network": "https://www.googleapis.com/compute/v1/projects/cmips-benchmark-2014-12/global/networks/default",
      "accessConfigs": [
        {
          "name": "External NAT",
          "type": "ONE_TO_ONE_NAT",
          "natIP": "130.211.60.200"
        }
      ]
    }
  ],
  "metadata": {
    "items": []
  },
  "tags": {
    "items": [
      "http-server",
      "https-server"
    ]
  },
  "zone": "https://www.googleapis.com/compute/v1/projects/cmips-benchmark-2014-12/zones/europe-west1-c",
  "canIpForward": false,
  "scheduling": {
    "automaticRestart": true,
    "onHostMaintenance": "MIGRATE"
  },
  "name": "cmips",
  "machineType": "https://www.googleapis.com/compute/v1/projects/cmips-benchmark-2014-12/zones/europe-west1-c/machineTypes/n1-standard-1",
  "serviceAccounts": [
    {
      "email": "default",
      "scopes": [
        "https://www.googleapis.com/auth/devstorage.read_only"
      ]
    }
  ]
}
```

```shell
gcloud compute --project "cmips-benchmark-2014-12" instances create "cmips" \
  --zone "europe-west1-c" \
  --machine-type "n1-standard-1" \
  --network "default" \
  --address 130.211.60.200 \
  --maintenance-policy "MIGRATE" \
  --scopes "https://www.googleapis.com/auth/devstorage.read_only" \
  --tags "http-server" "https-server" \
  --image "https://www.googleapis.com/compute/v1/projects/ubuntu-os-cloud/global/images/ubuntu-1410-utopic-v20141217" \
  --boot-disk-type "pd-standard" \
  --boot-disk-device-name "cmips"
```
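Since the console copies the REST body without whitespace, re-indenting it yourself takes only a few lines. This is a minimal Python sketch; the `compact` string is a shortened stand-in for the real request body, and `pretty` is a hypothetical helper name.

```python
import json

# Shortened stand-in for the compact body the console copies to the clipboard.
compact = ('{"name":"cmips","canIpForward":false,'
           '"tags":{"items":["http-server","https-server"]}}')


def pretty(body: str, indent: int = 2) -> str:
    """Parse a compact JSON body and return it re-indented for reading."""
    return json.dumps(json.loads(body), indent=indent)


if __name__ == "__main__":
    print(pretty(compact))
```

Parsing before printing also catches a truncated copy and paste, because `json.loads` raises an error on invalid JSON.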

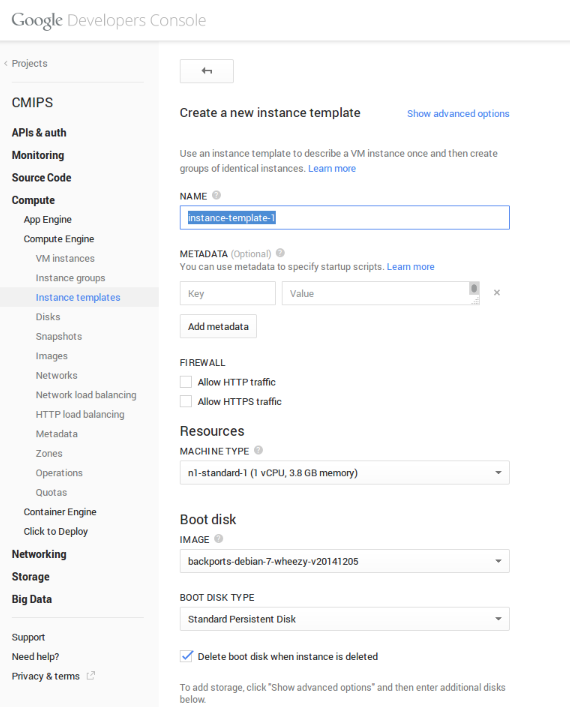

Instance templates are a bit like what I created for ECManaged, but Google’s version is much, much less powerful. It lets you create a template for the most common aspects, like the base image, but it is nothing like more portable, advanced and powerful solutions that support deploying base software from Puppet or Chef, pulling code from git/svn, and running multiple scripts in order with error control and decision logic.

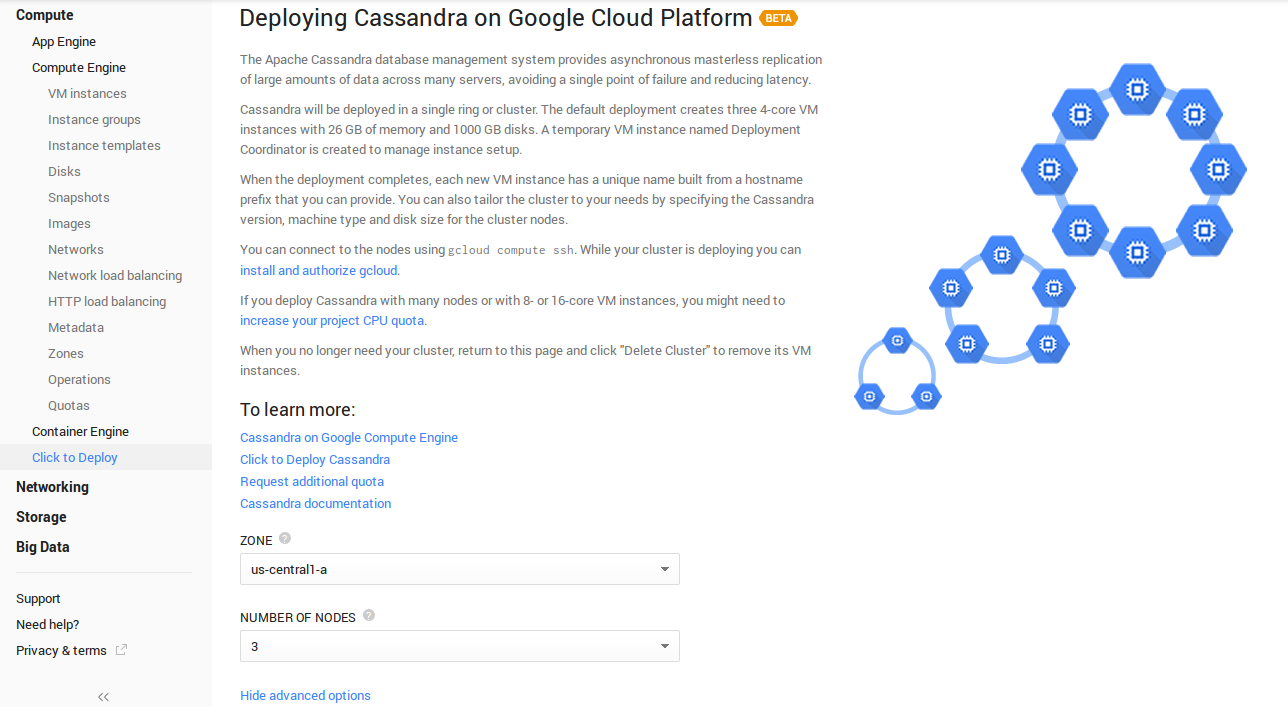

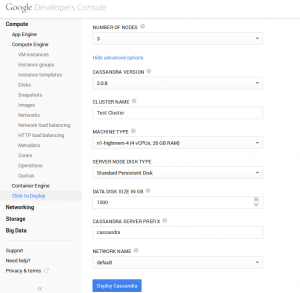

The Apps, a somewhat more customizable way to launch projects composed of several servers, where you can choose the number of nodes, the software version, etc., offer only a fraction of the advanced templating functionality I’m describing, but they are a step in the right direction.

A Health check is incorporated, able to check http/https, and that’s always nice. I use several external professional services for that, and it’s cool to know that Google includes this for free.

Network options are cool but complicated for newbies; they allow you to create private networks. In fact, the instances have a private address that is mapped (NAT) to the public IP. This is very important to know, as some programs may require special configuration.

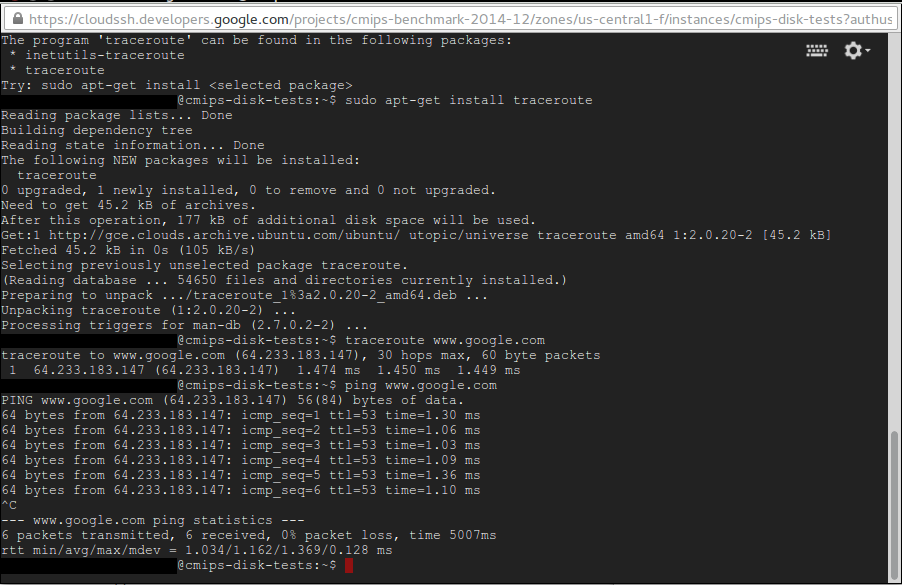

One thing that I think is an epic win about using Google Cloud is that your website will be on the same infrastructure as Google; I mean that the latency of the TCP/IP packets between Google and your instance is incredibly low. As you may know, one of the things that is very important for SEO is response time. With super-low latency, your website will always have faster response times to Google. So I was wondering whether this means that instances in Google Cloud would probably get a better PageRank.

A traceroute takes you straight to Google, and a ping returns in 1 ms. This is really good for SEO.

I asked the team at Google about this, and they told me:

“I asked a number of groups about this one. The general consensus is we do nothing to favor sites hosted on GCP and any latency benefit if one existed will be negligible in terms of SEO.”

I have not tested Google Cloud Storage, but it looks like an interesting option.

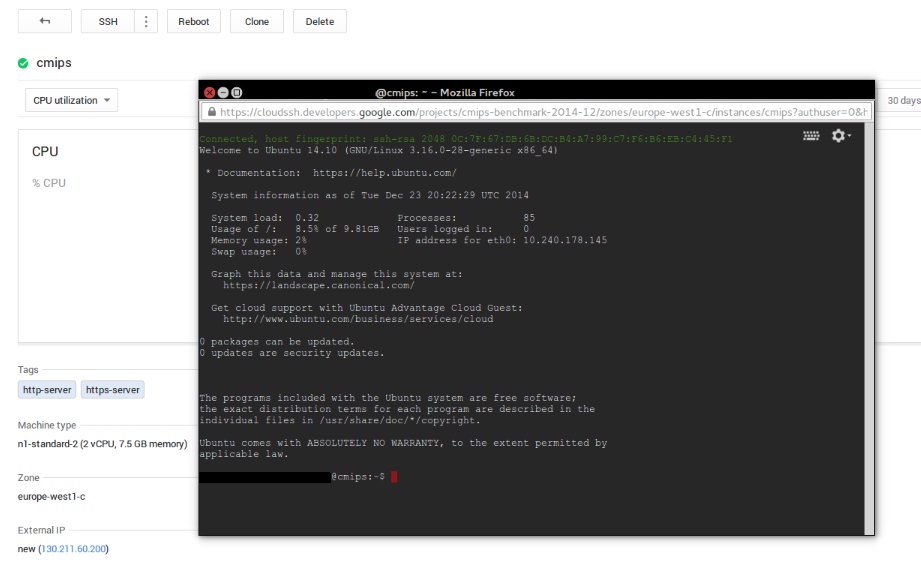

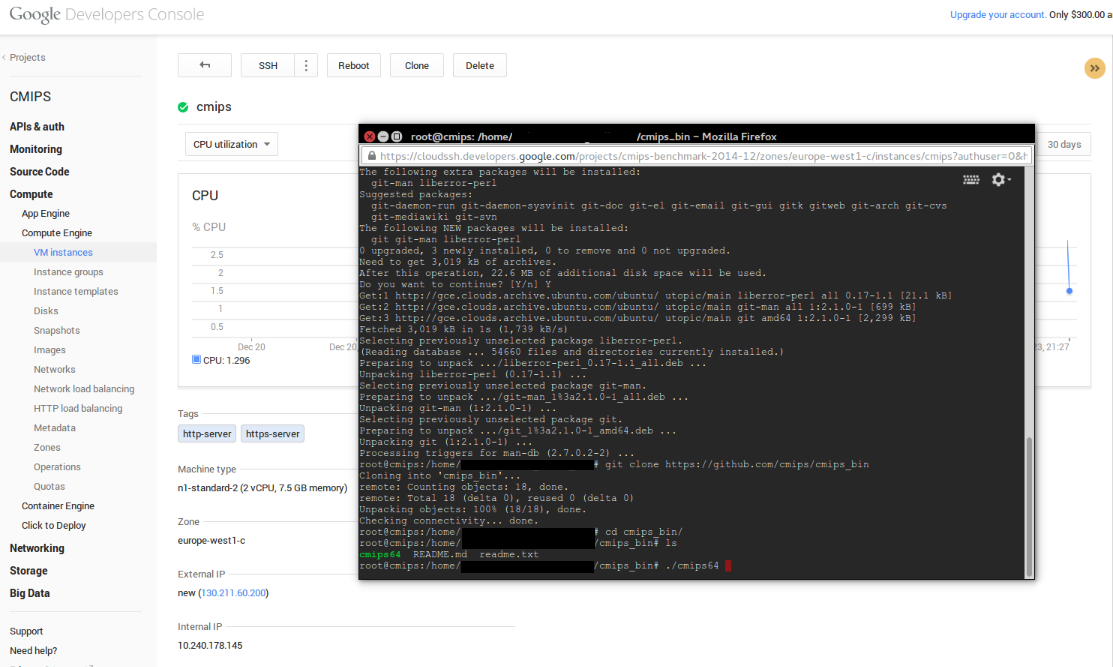

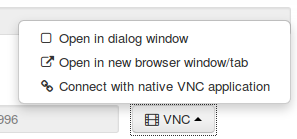

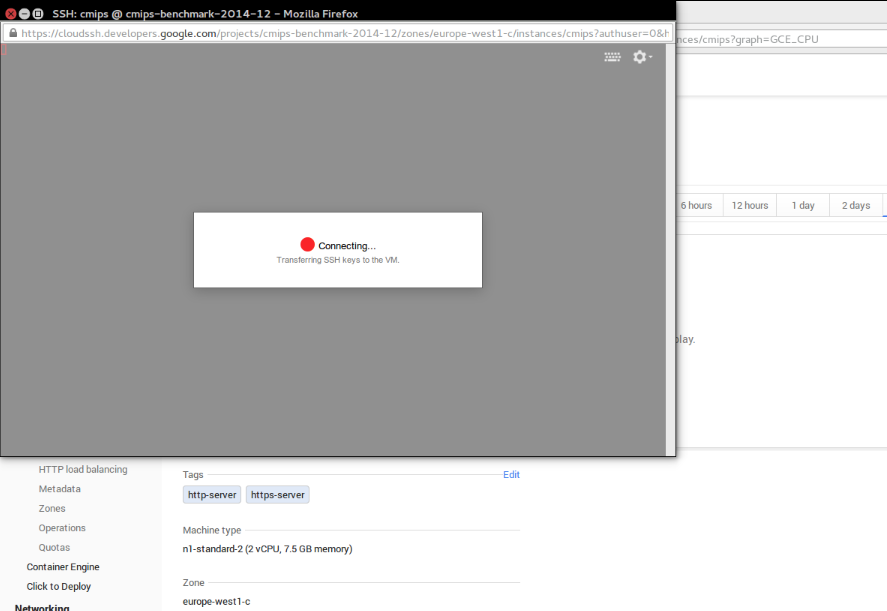

I did not find where to download the SSH key (cert) they created by default, nor a way to create one on Google for the servers directly. Fortunately there is a web SSH client, and the console sends the keys to it, so you can access the server and add whatever you need. The SSH port is open to the world by default. (And I can assure you there are robots trying to access your server from the very first moment.)

The user for the connection is your email, so I’ve deleted that part from the image:

That’s a bit disturbing when you notice it, and it will force developers who take screenshots for publishing on the web to hide sections of the image like I did.

I must say the web SSH client is one of the best I’ve tested, supporting copy and paste (CTRL C, CTRL V), offering colors, etc.

I miss the right click that I can use in the Amazon interface, which is not possible in the Google Cloud web console.

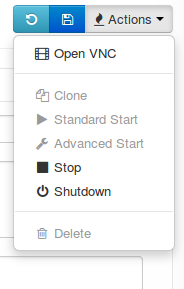

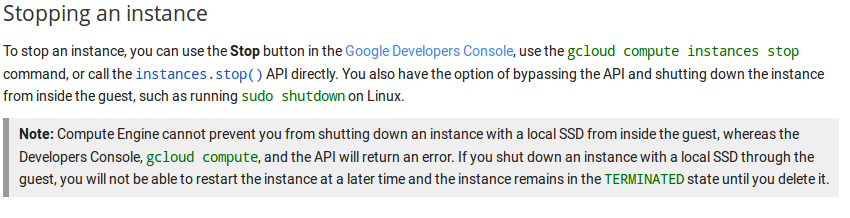

There is no STOP, only Reboot and DELETE. You cannot stop an instance, and if you shut down the server from the command line the machine stops and you’re not able to restart it. Fortunately the disk is not deleted by a manual shutdown, so you can generate a snapshot and launch a new instance from it.

Google just contacted me to tell me that the STOP button has just been implemented. However, the documentation says:

So on SSD it is perhaps not the best option.

Having no STOP is annoying. Many use snapshots for backups, but generating a snapshot of a live system that has not been stopped could leave a corrupted MySQL database in the snapshot. Really not appealing.

The Clone button is really confusing, as what it actually does is launch a new instance. One might expect it to take a snapshot and launch a new instance from it, but it only clones the properties, like the base image used.

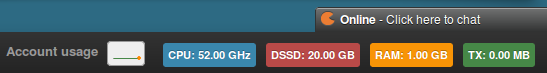

The price and the remaining free account time, shown at the top, are not updated in real time.

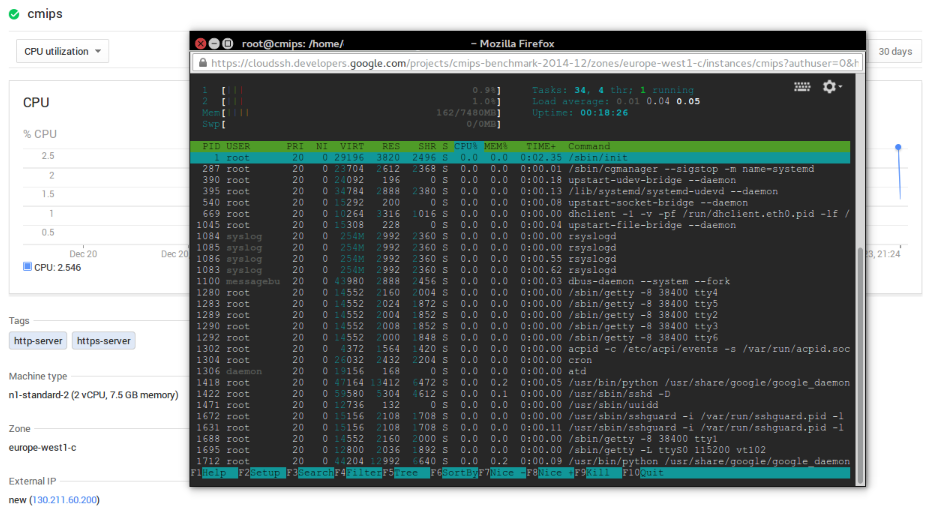

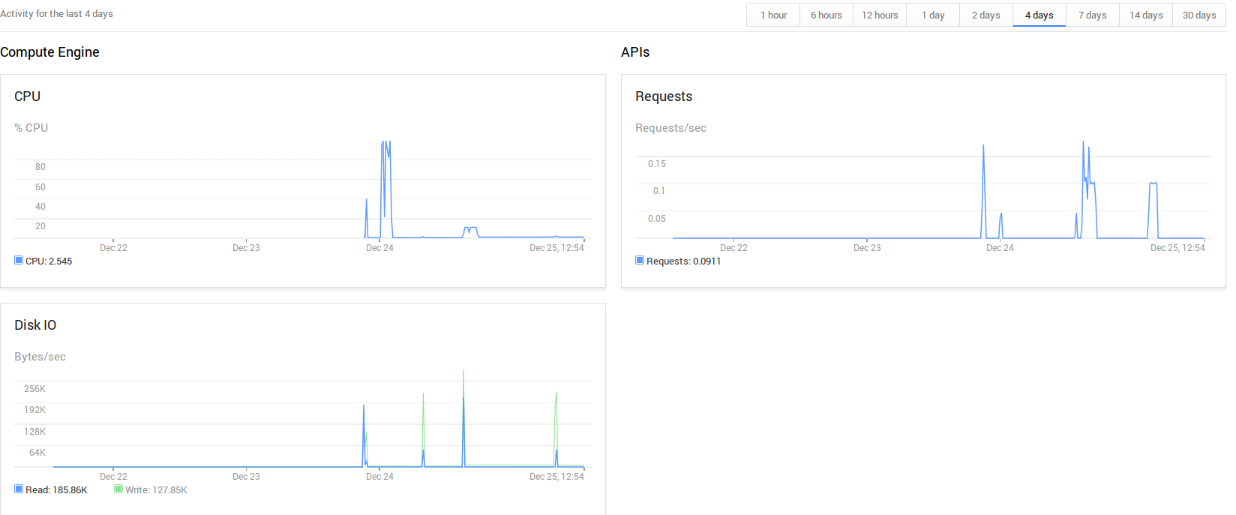

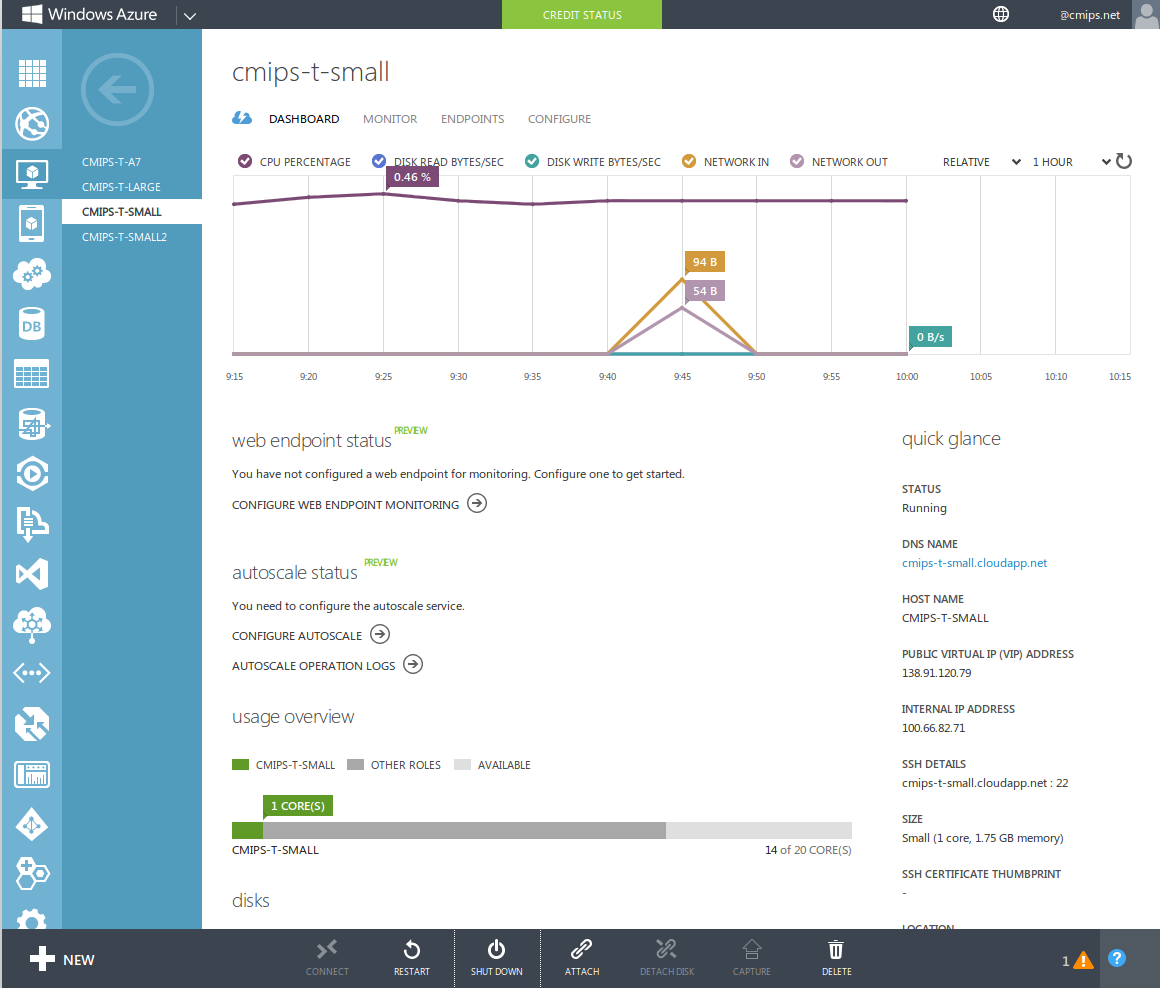

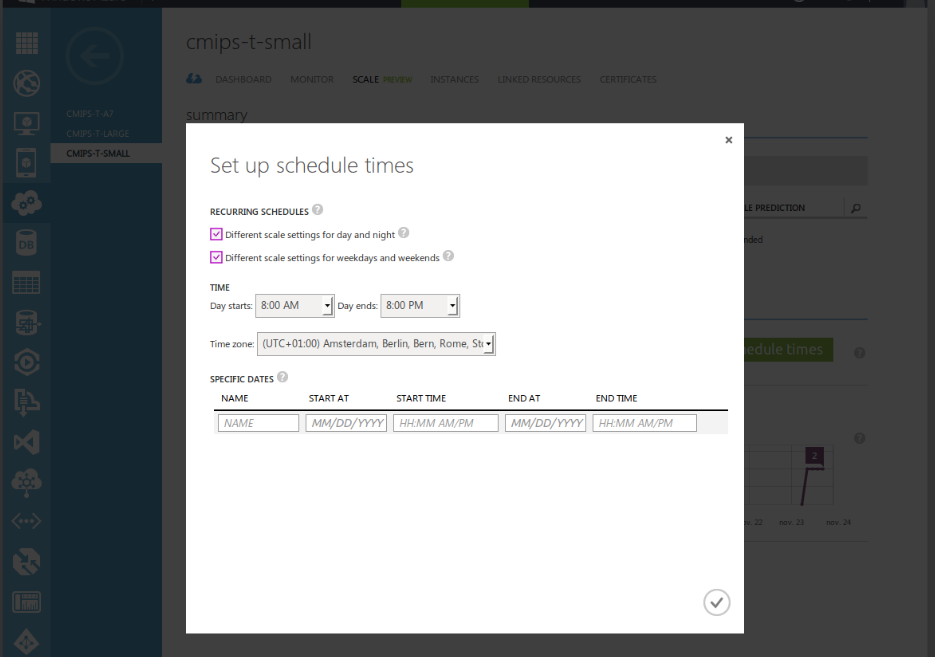

The CPU usage graphs are not accurate, as they are aggregated at project level, which is a mess if you deal with a few VMs instead of auto-scaling apps, as is also the case with Windows Azure. It works better than Azure, but don’t trust it at all: it approximates and aggregates. One ends up looking at a single server, over the past hour.

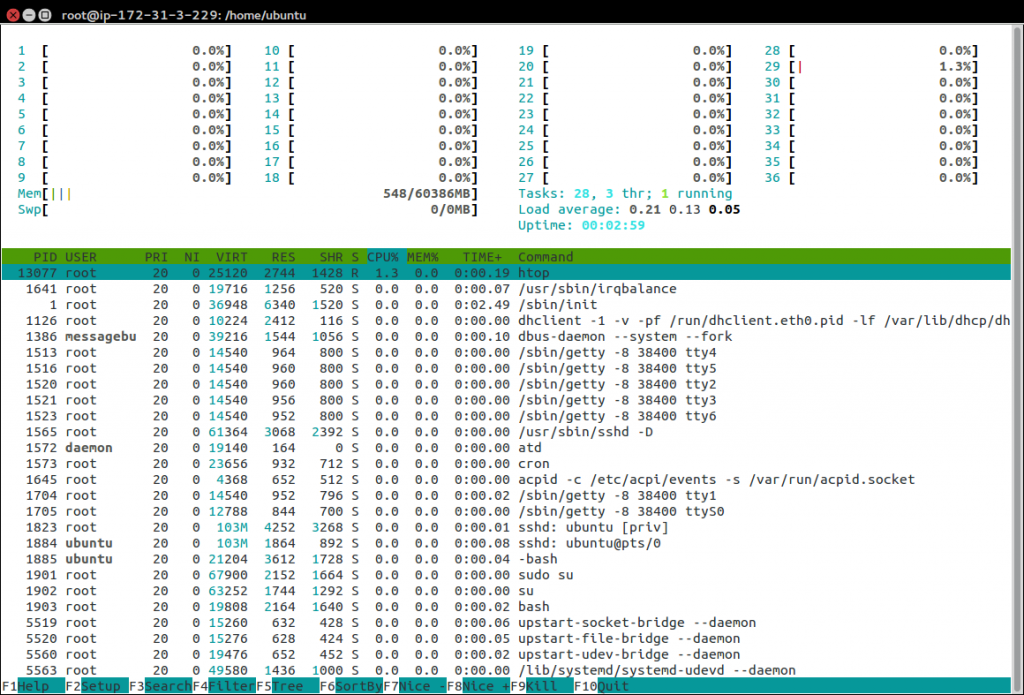

In this sample, taken while we benchmark, all the CPUs are at 100%; see how confusingly it is presented:

Resizing the instance, as you can do in Amazon, is not possible. You must create a snapshot and then launch a new instance from it.

sshguard is installed, blocking hacking attempts. And they try from the very first moment.

```
Dec 24 19:41:25 cmips-1 sshguard[1590]: Blocking 211.129.208.67:4 for >630secs: 40 danger in 4 attacks over 6 seconds (all: 40d in 1 abuses over 6s).
Dec 25 01:12:25 cmips-1 sshd[14646]: reverse mapping checking getaddrinfo for huzhou.ctc.mx.fund123.cn [122.225.97.87] failed - POSSIBLE BREAK-IN ATTEMPT
```

The instances have swap disabled by default. I’ve been seeing this at several providers lately, like Azure. I guess they do it for performance (to avoid swap, which goes over the network, overloading the host and the network), but it’s annoying to have to set up swap for every instance. (Having no swap is often bad: if memory use exceeds what is available, applications crash or are killed, so swap is recommended in many cases.)
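A quick way to confirm whether an instance has swap is to read the `SwapTotal` line of `/proc/meminfo`. This is a minimal Python sketch, assuming a Linux guest; the helper name `swap_total_kb` is hypothetical.

```python
from pathlib import Path


def swap_total_kb(meminfo_text: str) -> int:
    """Return the SwapTotal value in kB from /proc/meminfo text (0 if absent)."""
    for line in meminfo_text.splitlines():
        if line.startswith("SwapTotal:"):
            return int(line.split()[1])
    return 0


if __name__ == "__main__":
    meminfo = Path("/proc/meminfo")
    if meminfo.exists():  # only present on Linux
        total = swap_total_kb(meminfo.read_text())
        print("swap disabled" if total == 0 else f"swap enabled: {total} kB")
    else:
        print("/proc/meminfo not found; this check only works on Linux")
```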

I tried to pm-suspend the instance to see what would happen: nothing happens.

The web console doesn’t refresh after certain operations, such as deleting an instance.

I upgraded to the paid account, but in Europe I was not able to launch instances with more than 2 vCPU. It said there was no capacity. I had to test in US zones.

I had a similar issue recently in the Amazon cloud, in the new datacenter in Frankfurt, Germany, but with a much more powerful server, the c3.8xlarge. It is a good machine, but I was only able to launch up to c3.4xlarge because, it said, there was no more capacity in Frankfurt, so I moved the project to Ireland, where I had access to the full horsepower.

They have a pricing calculator:

https://cloud.google.com/pricing/?hl=en_US

The price policy is really interesting:

Once you use an instance for over 25% of a billing cycle, your price starts dropping. This discount is applied automatically, with no sign-up or up-front commitment required. If you use an instance for 100% of the billing cycle, you get a 30% net discount over our already low prices.

https://cloud.google.com/compute/pricing#sustained_use
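The quoted figures can be reproduced with a simple tiered model. In this Python sketch the tier rates (each successive quarter of the month billed at 100%, 80%, 60% and 40% of the base rate) are my assumption about how the schedule was structured; only the 25% threshold and the 30% net discount come from the quote.

```python
# (share of the billing cycle, fraction of the base rate charged in that share)
# Assumed tier schedule; chosen so that full-month use yields the quoted 30%.
TIERS = [(0.25, 1.0), (0.25, 0.8), (0.25, 0.6), (0.25, 0.4)]


def billed_fraction(usage: float) -> float:
    """Cost, as a fraction of one full month at base price, for `usage` in 0..1."""
    if not 0.0 <= usage <= 1.0:
        raise ValueError("usage must be between 0 and 1")
    remaining, total = usage, 0.0
    for width, rate in TIERS:
        used = min(remaining, width)
        total += used * rate
        remaining -= used
    return total


def net_discount(usage: float) -> float:
    """Effective discount versus paying the base rate for the same usage."""
    return 0.0 if usage == 0 else 1.0 - billed_fraction(usage) / usage


if __name__ == "__main__":
    for usage in (0.25, 0.5, 0.75, 1.0):
        print(f"{usage:.0%} of the month -> {net_discount(usage):.1%} net discount")
```

Under this model nothing is discounted up to 25% usage, and running the whole month averages out to (1.0 + 0.8 + 0.6 + 0.4) / 4 = 0.7 of the base price.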

The CPU reports itself as an Intel Xeon at 2.50 GHz, but this is intercepted by the hypervisor software. I can’t say for certain what CPU model they are using.

With the larger n1-highcpu-16 model, the CPU is reported to be an Intel Xeon at 2.60 GHz:

```shell
grep -i --color "model name" /proc/cpuinfo
```

The detailed report (of one core):

```
processor : 15
vendor_id : GenuineIntel
cpu family : 6
model : 45
model name : Intel(R) Xeon(R) CPU @ 2.60GHz
stepping : 7
microcode : 0x1
cpu MHz : 2599.998
cache size : 20480 KB
physical id : 0
siblings : 16
core id : 7
cpu cores : 8
apicid : 15
initial apicid : 15
fpu : yes
fpu_exception : yes
cpuid level : 13
wp : yes
flags : fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush mmx fxsr sse sse2 ss ht syscall nx pdpe1gb rdtscp lm constant_tsc nopl xtopology eagerfpu pni pclmulqdq ssse3 cx16 sse4_1 sse4_2 x2apic popcnt aes xsave avx hypervisor lahf_lm xsaveopt
bogomips : 5199.99
clflush size : 64
cache_alignment : 64
address sizes : 46 bits physical, 48 bits virtual
power management:
```

The solution is very complete, with the ability to create Network Load Balancers and HTTP Load Balancers for easy scaling, and a built-in health check to determine whether a server behind the load balancer is healthy or not.

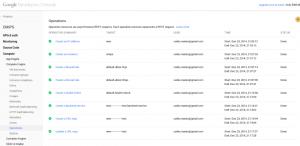

Other nice options are the audit options:

An overview of what you have in each zone:

And very useful, to know your quota limits:

With the pertinent REST call:

```
{
  "kind": "compute#project",
  "selfLink": "https://www.googleapis.com/compute/v1/projects/cmips-benchmark-2014-12",
  "id": "9164686651607534211",
  "creationTimestamp": "2014-12-23T11:55:50.451-08:00",
  "name": "cmips-benchmark-2014-12",
  "commonInstanceMetadata": {
    "kind": "compute#metadata",
    "fingerprint": "m4NhA9a-F04="
  },
  "quotas": [
    { "metric": "SNAPSHOTS", "limit": 1000, "usage": 0 },
    { "metric": "NETWORKS", "limit": 5, "usage": 1 },
    { "metric": "FIREWALLS", "limit": 100, "usage": 6 },
    { "metric": "IMAGES", "limit": 100, "usage": 0 },
    { "metric": "STATIC_ADDRESSES", "limit": 7, "usage": 0 },
    { "metric": "ROUTES", "limit": 100, "usage": 2 },
    { "metric": "FORWARDING_RULES", "limit": 50, "usage": 0 },
    { "metric": "TARGET_POOLS", "limit": 50, "usage": 0 },
    { "metric": "HEALTH_CHECKS", "limit": 50, "usage": 1 },
    { "metric": "IN_USE_ADDRESSES", "limit": 23, "usage": 0 },
    { "metric": "TARGET_INSTANCES", "limit": 50, "usage": 0 },
    { "metric": "TARGET_HTTP_PROXIES", "limit": 50, "usage": 0 },
    { "metric": "URL_MAPS", "limit": 50, "usage": 1 },
    { "metric": "BACKEND_SERVICES", "limit": 50, "usage": 1 }
  ]
}
```
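A response like this is easy to turn into a small quota report. The Python sketch below uses a shortened sample with three of the quota entries from the response; `quota_report` is a hypothetical helper, and fetching the JSON from the API is left out.

```python
import json

# Shortened sample: three of the quota entries from the project response.
SAMPLE = """
{ "quotas": [
    { "metric": "SNAPSHOTS", "limit": 1000, "usage": 0 },
    { "metric": "NETWORKS", "limit": 5, "usage": 1 },
    { "metric": "FIREWALLS", "limit": 100, "usage": 6 }
] }
"""


def quota_report(project: dict) -> list:
    """Return (metric, usage, limit, percent used) rows, highest percentage first."""
    rows = []
    for quota in project.get("quotas", []):
        limit = quota["limit"]
        percent = 100.0 * quota["usage"] / limit if limit else 0.0
        rows.append((quota["metric"], quota["usage"], limit, percent))
    return sorted(rows, key=lambda row: row[3], reverse=True)


if __name__ == "__main__":
    for metric, usage, limit, percent in quota_report(json.loads(SAMPLE)):
        print(f"{metric:<12} {usage:>4}/{limit:<5} {percent:5.1f}%")
```

Sorting by percentage puts the quotas closest to their limit at the top, which is usually what you want to watch before launching more instances.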

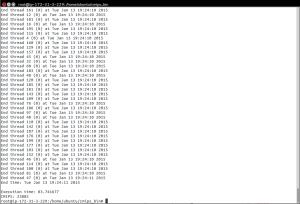

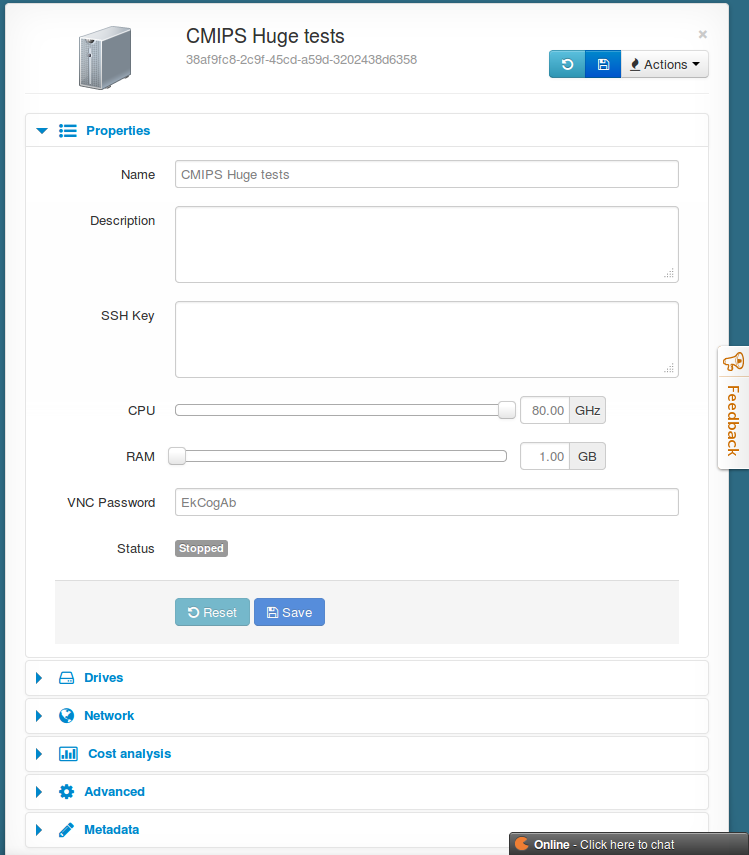

For the performance tests I cloned cmips v.1.0.5:

```shell
git clone https://github.com/cmips/cmips_bin
```

Then I launched several instances and ran several tests.

I must say that instances start really fast. That’s a very good point when auto-scaling.

As the free account allowed me a maximum of n1-standard-2 (2 vCPU, 7.5 GB memory) in the zone europe-west1-c, I upgraded my account and paid for the tests.

We tested these instances:

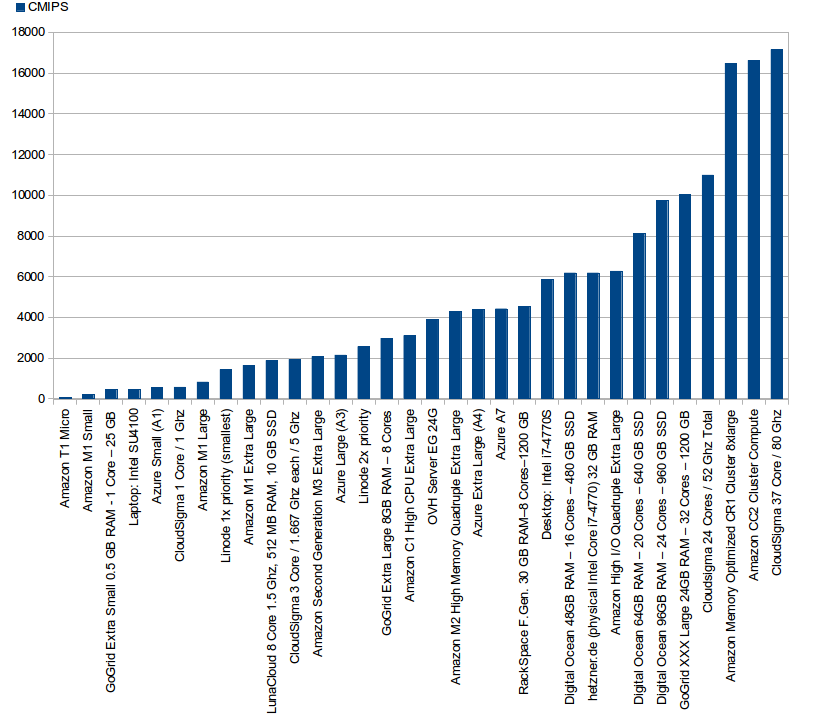

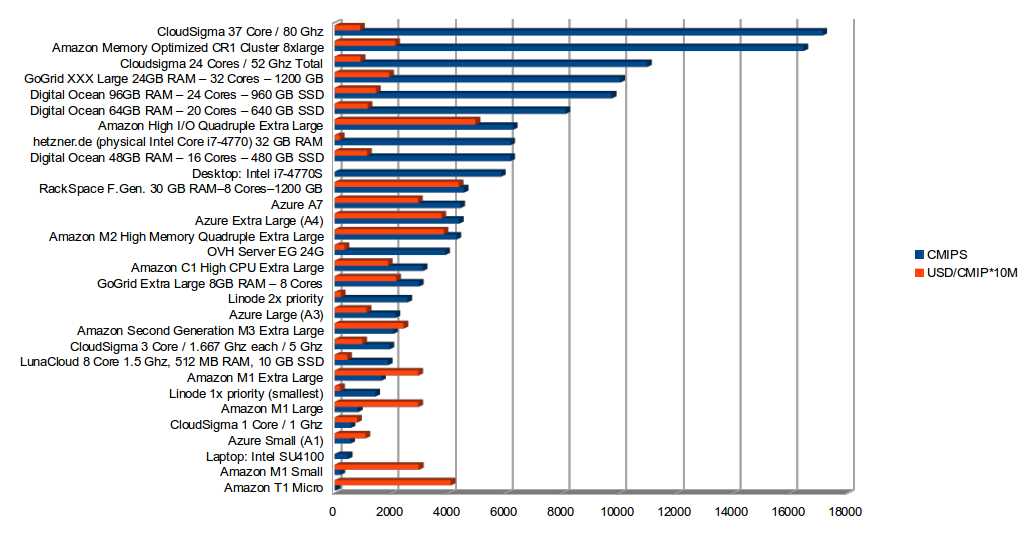

- Google Cloud f1-micro (1 vCPU, 0.6 GB RAM, Google Compute Engine Units: Shared CPU), scoring 150 CMIPS

- Google Cloud g1-small (1 vCPU, 1.7 GB RAM, Google Compute Engine Units: 1.38), scoring 363 CMIPS

- Google Cloud n1-highcpu-2 (2 vCPU 1.8 GB RAM, Google Compute Engine Units: 5.5), scoring 1046 CMIPS

- Google Cloud n1-standard-2 (2 vCPU 7.5 GB RAM, Google Compute Engine Units: 5.5), scoring 1093 CMIPS

- Google Cloud n1-highcpu-8 (8 vCPU 7.2 GB RAM, Google Compute Engine Units: 22), scoring 4656 CMIPS

- Google Cloud n1-highcpu-16 (16 vCPU 14.4 GB RAM, Google Compute Engine Units: 44), scoring 9357 CMIPS

- Google Cloud n1-standard-16 (16 vCPU 60 GB RAM, Google Compute Engine Units: 44), scoring 9347 CMIPS

The trailing number gives the number of vCPU. In the case of the f1-micro, as the CPU is shared, there is no number. A highcpu model has the same CPU power as the standard model with the same number of cores, but the standard has more RAM.
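The naming convention and the scores above can be combined to compare CMIPS per vCPU. In this Python sketch the scores are copied from the list of tested instances; counting the shared-core types as 1 vCPU follows that list, and the helper names are hypothetical.

```python
from typing import Optional

# CMIPS scores copied from the list of tested instances.
SCORES = {
    "f1-micro": 150,
    "g1-small": 363,
    "n1-highcpu-2": 1046,
    "n1-standard-2": 1093,
    "n1-highcpu-8": 4656,
    "n1-highcpu-16": 9357,
    "n1-standard-16": 9347,
}


def vcpus_from_name(name: str) -> Optional[int]:
    """Read the vCPU count from the trailing number; None for shared-core types."""
    suffix = name.rsplit("-", 1)[-1]
    return int(suffix) if suffix.isdigit() else None


def cmips_per_vcpu(name: str) -> float:
    """Score divided by vCPU count, counting shared-core types as 1 vCPU."""
    return SCORES[name] / (vcpus_from_name(name) or 1)


if __name__ == "__main__":
    for name in SCORES:
        print(f"{name:<15} {cmips_per_vcpu(name):7.1f} CMIPS per vCPU")
```

For the n1 models tested, the score per vCPU stays in a narrow band between roughly 520 and 585 CMIPS, so the scores scale close to linearly with the number of cores.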

To get a quick relative idea, my old laptop, equipped with a dual-core Intel SU4100 processor, scores 460 CMIPS, and my desktop tower, with an Intel i7-4770S (4 cores, 8 threads), scores 5842 CMIPS.

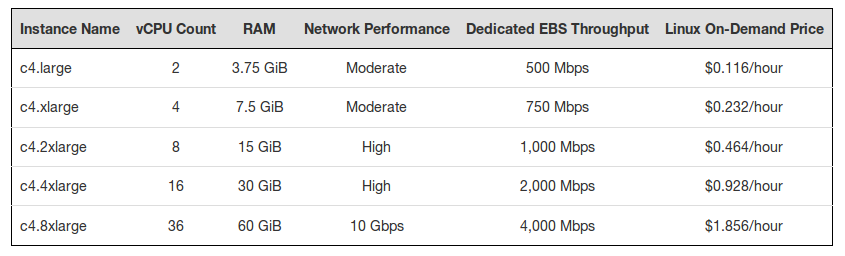

Network throughput depends on the number of cores and the power of the instance, as described in the documentation:

Egress throughput caps

Outbound or egress traffic from a virtual machine is subject to maximum network egress throughput caps. These caps are dependent on the number of cores that a virtual machine has. Each core is subject to a 2 Gbits/second cap. Each additional core increases the network cap. For example, a virtual machine with 4 cores has a network throughput cap of 2 Gbits/sec * 4 = 8 Gbits/sec network cap.

Virtual machines that have 0.5 or fewer cores, such as shared-core machine types, are treated as having 0.5 cores, and a network throughput cap of 1 Gbit/sec. All caps are meant as maximum possible performance, and not sustained performance.

Network caps are the sum of persistent disk write I/O and virtual machine network traffic. Depending on your needs, you may need to make sure your virtual machine allows for your desired persistent disk throughput. For more information, see the Persistent Disk page.
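The rule quoted above reduces to a one-line formula. This Python sketch applies it as stated (2 Gbit/s per core, with anything at or below 0.5 cores treated as 0.5 cores); any overall ceiling that the excerpt does not mention is ignored, and the function name is hypothetical.

```python
GBITS_PER_CORE = 2.0  # per-core egress cap from the quoted documentation
MIN_CORES = 0.5       # shared-core machine types are treated as 0.5 cores


def egress_cap_gbits(cores: float) -> float:
    """Maximum egress throughput in Gbit/s for a VM with the given core count."""
    return max(cores, MIN_CORES) * GBITS_PER_CORE


if __name__ == "__main__":
    for cores in (0.2, 1, 4, 16):
        print(f"{cores} cores -> {egress_cap_gbits(cores)} Gbit/s cap")
```

Remember that persistent disk writes count against the same cap, so the figure is an upper bound on network plus disk write traffic, not on network traffic alone.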

A sad note: I understand the reasons, but blocking outgoing traffic to port 25 is a deal breaker for most companies. The documentation says:

Blocked traffic

Compute Engine blocks or restricts traffic through all of the following ports/protocols between the Internet and virtual machines, and between two virtual machines when traffic is addressed to their external IP addresses through these ports (this also includes load-balanced addresses).

- All outgoing traffic to port 25 (SMTP) is blocked.

- Most outgoing traffic to port 465 or 587 (SMTP over SSL) is blocked, except for known Google IP addresses.

- Traffic that uses a protocol other than TCP, UDP, and ICMP is blocked, unless explicitly allowed through Protocol Forwarding.

I tried it and yes, from the instance I was unable to reach servers outside Google’s network on port 25. It is sad, because everyone needs to send email to an email server; even a WordPress site sends emails with passwords to its users.
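A check like this can be scripted with a plain TCP connection attempt. This is a minimal Python sketch using only the standard library; `can_connect` is a hypothetical helper, and the host in the commented usage line is a placeholder, not a server used in the tests.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port can be opened in time."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers refused connections, timeouts and DNS failures
        return False


# Example usage from inside an instance (placeholder host name):
# print(can_connect("mail.example.com", 25))
```

A `False` result only says the connection could not be opened; it cannot tell a provider-level block apart from a server that is down or a firewall on the other side.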

I asked Google about that, and at least they offer some possible solutions:

This is a protection against spam as all mail ports are blocked.

There are two ways to handle this. One is to use one of the proxy services we list in the help. The other is to contact Google and ask for it to be unblocked.

Disk performance

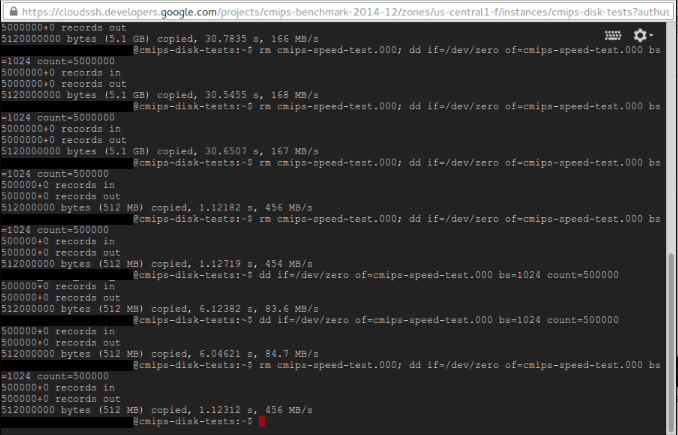

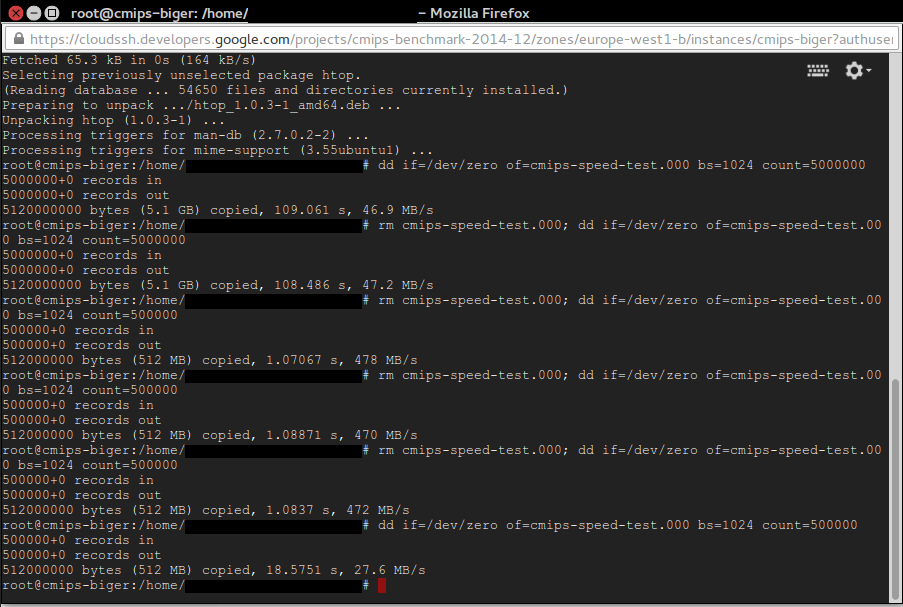

I tested the disk performance using an n1-highcpu-16 instance.

As a Google Performance Lead told me, dd is not the best tool for measuring performance, and he suggested using FIO:

“The problem is most I/O just gets cached in memory which makes is really hard to draw any conclusions from. I do see others using it and have been doing my best to steer them to fio. FIO is a tool that allows you the most control over the experiment. You can control queue depth size (ie number of outstanding IO’s), the size of ios, whether or not they bypass the Filesystem cache (ie direct IO), etc.”

As I explained to him, and noted in other articles, I use dd for writing, with sizes of 5 GB, because many hypervisors (the software that runs on the physical server and manages the guest instances) implement some disk cache to offer better performance. The hypervisor then decides when the data is actually sent to the network storage. As the purpose of CMIPS is to offer start-ups realistic information about what they can and cannot expect from cloud providers, writing 5 GB overruns the different layers of cache that may exist and gives a more trustworthy impression of what sustained data rate is really supported.

The performance for the standard disk I/O for writing was average to very good.

I got from 50 MB/s to 167 MB/s when writing, in different tests creating a 5 GB file of zeros on the disk, and 456 MB/s with 500 MB or 50 MB files. Although it says “standard disk”, which leads one to think of magnetic disks, it looks to me as if those are also SSD disks. Perhaps not as performant, but good enough. One of the reasons we test with big files is to avoid misleading data from hypervisors that cache disk writes and report them to the guest system as done, thereby reporting unrealistically fast times for small files; if the architects trust those figures, this causes problems when the system really has to withstand high I/O.

The exact command executed for the 5 GB file test was:

```shell
dd if=/dev/zero of=cmips-speed-test.000 bs=1024 count=5000000
```
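For reference, the arithmetic behind this command can be sketched in Python. The block size and count are taken from the command; the helper names are hypothetical, and MB here means 1,000,000 bytes, which is the unit GNU dd uses when it reports throughput.

```python
BYTES_PER_MB = 1_000_000  # GNU dd reports MB/s using decimal megabytes


def dd_total_bytes(bs: int, count: int) -> int:
    """Total bytes written by dd for a given block size and block count."""
    return bs * count


def throughput_mb_s(total_bytes: int, seconds: float) -> float:
    """Throughput in MB/s for a transfer that took `seconds`."""
    return total_bytes / seconds / BYTES_PER_MB


def seconds_at(total_bytes: int, mb_per_s: float) -> float:
    """Time needed to write `total_bytes` at a sustained rate in MB/s."""
    return total_bytes / (mb_per_s * BYTES_PER_MB)


total = dd_total_bytes(bs=1024, count=5_000_000)

if __name__ == "__main__":
    print(f"total written: {total} bytes ({total / BYTES_PER_MB:.0f} MB)")
    for rate in (27.6, 167, 478):
        print(f"at {rate} MB/s the test takes about {seconds_at(total, rate):.1f} s")
```

So the "5 GB" file is 5,120,000,000 bytes, and the gap between the slowest and fastest rates mentioned in this article is the difference between a test of about three minutes and one of about ten seconds.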

Please note the different times depending on whether we rm the file before the dd or overwrite it.

With 5 GB, 500 MB, 50 MB, 5 MB.

Then I tried the SSD and it was really disappointing.

For 5 GB I got between 24.1 MB/s and 32.6 MB/s, and in tests on another day a maximum of 47.2 MB/s. That was bad. Honestly, I expected much, much better results and I was worried about it.

It could be due to the nature of SSD, TRIM, empty blocks vs partially filled blocks, but many providers solve this and offer much, much higher speeds. Working with network storage is always challenging at those scales, but it makes no sense that magnetic disks perform so much better than SSD disks.

Actually, 24-47 MB/s for an SSD is unacceptable.

On my dedicated servers with hardware RAID 5 of SSDs I get more than 1000 MB/s when writing, and Amazon offers around 550 MB/s when writing, no matter whether I do the test with dd for 5 GB, 500 MB, 50 MB or 5 MB.

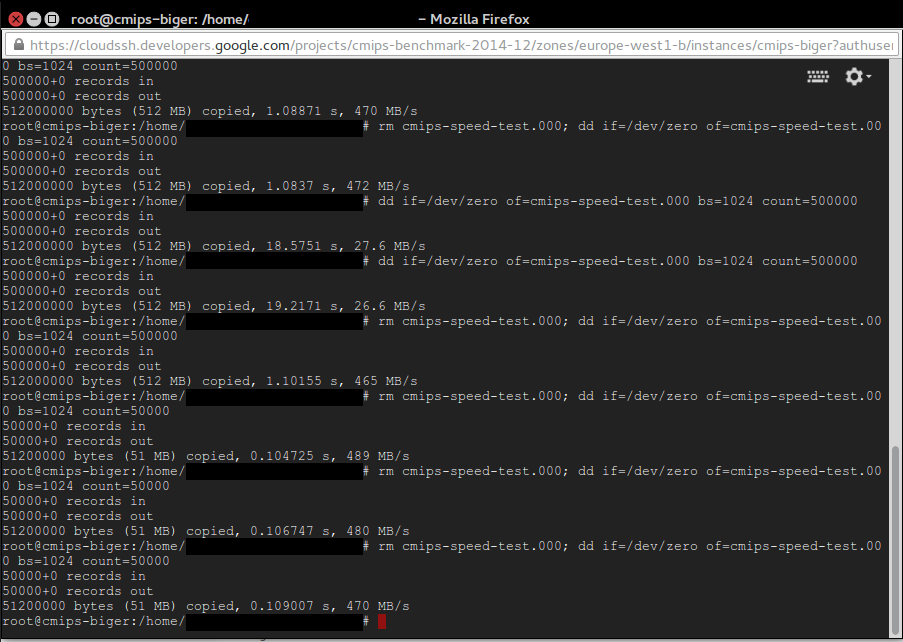

Fortunately this was only happening with the 5 GB files.

When I tested with a 500 MB or 50 MB file, the results were good, as expected: around 470-490 MB/s.

Note: to get the best result in write tests, do not overwrite an existing file; create a new one, or delete the old one before running dd. That’s because of the TRIM nature of SSD disks, which makes it necessary to erase old content before writing, and that is slow in terms of cycles. Some providers avoid that need, others don’t. When I overwrite in Amazon I lose about 50% of the performance, getting around 270 MB/s instead of 500 MB/s, but when I do it in Google it drops from 478 MB/s to 27.6 MB/s! See the picture attached.

```shell
rm cmips-speed-test.000; dd if=/dev/zero of=cmips-speed-test.000 bs=1024 count=5000000
```

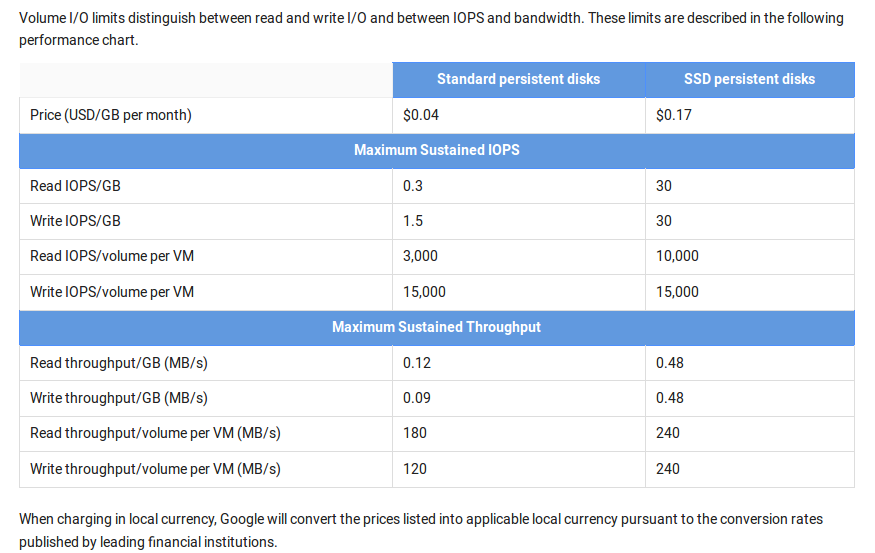

We also discussed this with the wonderful engineering crew at Google, and they explained to me that I/O performance is provisioned according to the size of the instance’s disk. They also referred me to the documentation about disks, which is really excellent. You must read it to fully understand Google Cloud’s potential, as it also offers bursting, for example for instances with a small disk and little I/O that benefit from bursting the speed for occasional I/O needs.

From the docs, in order to try to estimate predictable performance results:

Performance depends on I/O pattern, volume size, and instance type.

As an example of how you can use the performance chart to determine the disk volume you want, consider that a 500GB standard persistent disk will give you:

- (0.3 x 500) = 150 small random reads

- (1.5 x 500) = 750 small random writes

- (0.12 x 500) = 60 MB/s of large sequential reads

- (0.09 x 500) = 45 MB/s of large sequential writes
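The worked example above generalizes to any disk size. This Python sketch multiplies the per-GB factors from the documentation excerpt by the disk size; the function and key names are hypothetical, and bursting is not modelled.

```python
# Per-GB factors from the documentation excerpt (standard persistent disk).
STANDARD_PD_PER_GB = {
    "random_read_iops": 0.3,
    "random_write_iops": 1.5,
    "sequential_read_mb_s": 0.12,
    "sequential_write_mb_s": 0.09,
}


def standard_pd_estimate(size_gb: float) -> dict:
    """Estimated sustained performance of a standard persistent disk by size."""
    return {name: round(factor * size_gb, 2)
            for name, factor in STANDARD_PD_PER_GB.items()}


if __name__ == "__main__":
    for size_gb in (10, 500):
        print(size_gb, "GB:", standard_pd_estimate(size_gb))
```

The point to take away is that a small disk gets a proportionally small sustained budget, so volume size is a performance setting as much as a capacity setting.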

IOPS numbers for SSD persistent disks are published with the assumption of up to 16K I/O operation size.

Compute Engine assumes that each I/O operation will be less than or equal to 16KB in size. If your I/O operation is larger than 16KB, the number of IOPS will be proportionally smaller. For example, for a 200GB SSD persistent disk, you can perform (30 * 200) = 6,000 16KB IOPS or 1,500 64KB IOPS.

Conversely, the throughput you experience decreases if your I/O operation is less than 4KB. The same 200GB volume can perform throughput of 96 MB/s of 16 KB I/Os or 24 MB/s of 4KB I/Os.
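The SSD figures in the excerpt follow from two simple relations, sketched here in Python: 30 IOPS per GB for operations up to 16 KB, a proportional reduction in IOPS for larger operations, and throughput as IOPS multiplied by operation size. The function names are hypothetical, and 1 MB is taken as 1000 KB because that is what reproduces the documented numbers.

```python
IOPS_PER_GB = 30   # from the 200 GB example: 30 * 200 = 6,000 IOPS
BASE_IO_KB = 16    # IOPS are quoted for operations of up to 16 KB


def ssd_iops(size_gb: float, io_kb: float) -> float:
    """IOPS for a given volume size and I/O size, scaled down above 16 KB."""
    base = IOPS_PER_GB * size_gb
    if io_kb <= BASE_IO_KB:
        return base
    return base * BASE_IO_KB / io_kb


def ssd_throughput_mb_s(size_gb: float, io_kb: float) -> float:
    """Throughput in MB/s (1 MB = 1000 KB) as IOPS times I/O size."""
    return ssd_iops(size_gb, io_kb) * io_kb / 1000


if __name__ == "__main__":
    for io_kb in (4, 16, 64):
        print(f"200 GB, {io_kb} KB I/O: {ssd_iops(200, io_kb):.0f} IOPS, "
              f"{ssd_throughput_mb_s(200, io_kb):.0f} MB/s")
```

For the 200 GB volume this reproduces the documented values: 6,000 IOPS and 96 MB/s at 16 KB, 1,500 IOPS at 64 KB, and 24 MB/s at 4 KB.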

To be fair, in my tests the disk was the smallest possible that could hold the Ubuntu image and the 5 GB files, so around 8 GB.

The block size being written is also important. In my tests I used 1 KB (bs=1024), which is typical for logs. Other typical options for programs are 4 KB, 8 KB or 16 KB. Disk performance testing deserves its own set of tests and articles, as the scenario can change completely with other software like Hadoop, which uses blocks of 64 MB or bigger and writes large chunks.

Also, as indicated before, network caps apply to storage plus network traffic, so if you deal with heavy traffic, or plan to serve video on demand, you’ll benefit from launching fewer, bigger instances rather than many smaller ones. This is very important to take into consideration for Cassandra clusters, for example.

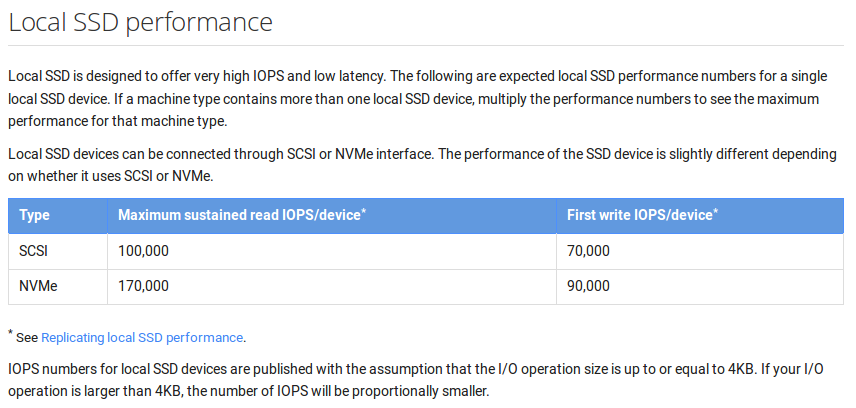

Just after I finished this article I read via RSS that, 7 hours earlier, new local SSD storage had been released for Google Cloud. Local SSD is available with the gcloud command-line tool version 0.9.37 and higher.

As I was about to publish the article, a new service, in beta, was presented: Google Cloud Monitoring.

I love that it can send you alerts by SMS, as well as by email, PagerDuty and HipChat. It claims to “provide insight into many common open source servers with minimal configuration. Understand trends unique to your Cassandra cluster or Nginx servers.”

Thanks to Anthony F. Voellm for the interesting discussion on Google performance and tools, for getting his whole team and his colleagues to help resolve the most cutting-edge doubts I raised, for sharing this analysis with his coworkers, product managers and other teams, and for being so gracious about receiving this feedback.